ADVANCED AUTONOMY

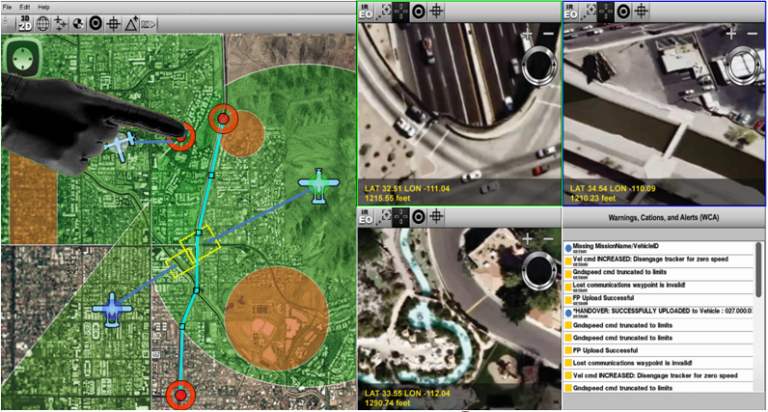

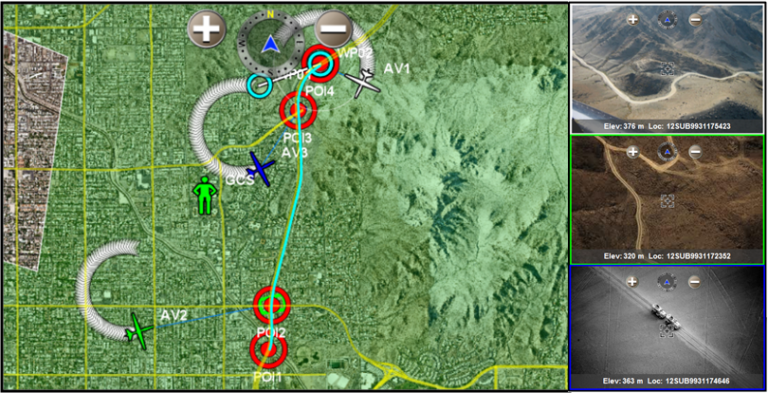

Our auto-router, sensor footprint, radio frequency line-of-sight, and route-and-area survey algorithms can be combined to create a powerful autonomy engine for your unmanned systems.

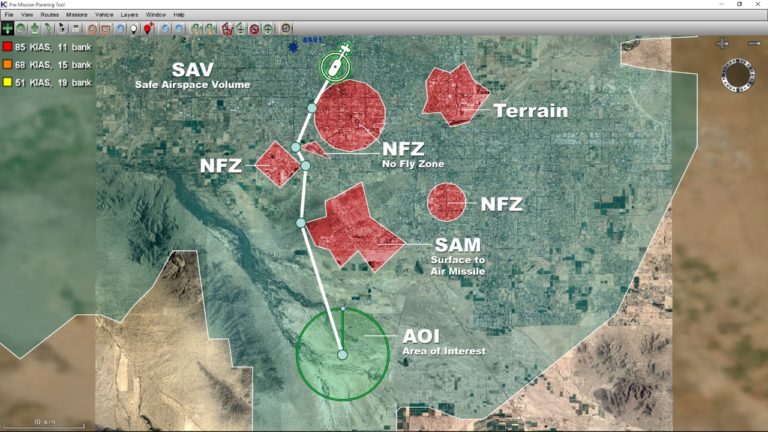

Auto-Routing

This component finds the most efficient path around obstacles in real time. In Monte Carlo simulations, Kutta’s auto-router routed around a randomized mix of 50 to 100 circles and polygons in an average time of under 0.12 msec on a 1 GHz Intel processor. All flight planning modes work in conjunction with the integrated auto-routing algorithm.
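
As a rough illustration of one building block a router of this kind needs, the sketch below tests whether a straight flight leg clears a set of circular obstacles. It is not Kutta's algorithm; the function names, the flat 2D coordinates, and the obstacle representation (center, radius) are assumptions made for this example only.

```python
import math

def segment_clears_circle(a, b, centre, radius):
    """Return True if segment a-b stays strictly outside the circle.

    Hypothetical helper: points are (x, y) tuples in a flat local frame.
    """
    ax, ay = a
    bx, by = b
    cx, cy = centre
    dx, dy = bx - ax, by - ay
    seg_len_sq = dx * dx + dy * dy
    if seg_len_sq == 0:
        t = 0.0  # degenerate leg: a and b coincide
    else:
        # Parameter of the closest point on the infinite line, clamped to the segment.
        t = ((cx - ax) * dx + (cy - ay) * dy) / seg_len_sq
        t = max(0.0, min(1.0, t))
    px, py = ax + t * dx, ay + t * dy
    return math.hypot(cx - px, cy - py) > radius

def blocked_legs(waypoints, circles):
    """Return indices of legs (i -> i+1) that touch or cross any circle."""
    return [
        i
        for i in range(len(waypoints) - 1)
        if not all(
            segment_clears_circle(waypoints[i], waypoints[i + 1], c, r)
            for c, r in circles
        )
    ]
```

A router could call `blocked_legs` on a candidate plan and re-plan only the legs it reports; polygon obstacles would need a separate segment-polygon test.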

Radio Frequency Line-of-Sight Analysis (RF LOS)

The RF LOS component allows an operator to easily define the radio frequency (RF) characteristics of the transmitter and receiver. Using Digital Terrain Elevation Data (DTED), Kutta’s efficient RF LOS algorithm determines where the unmanned aerial vehicle can be safely controlled. The vertices of the resulting area are provided in modular fashion for display on mapping applications or use by other autonomy services.
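
The core geometric idea behind a terrain line-of-sight check can be sketched as follows. This is a simplified illustration, not Kutta's implementation: it assumes a terrain profile already sampled at equal spacing between the two antennas, and it ignores Earth curvature, atmospheric refraction, and Fresnel-zone clearance. The function name and parameters are hypothetical.

```python
def has_line_of_sight(profile_m, tx_height_m, rx_height_m):
    """Return True if no terrain sample rises above the straight ray.

    profile_m: terrain elevations in metres, equally spaced, from the
    transmitter site (first sample) to the receiver site (last sample).
    tx_height_m / rx_height_m: antenna heights above the local terrain.
    """
    n = len(profile_m)
    if n < 2:
        return True  # nothing between the two antennas to block the ray
    tx_alt = profile_m[0] + tx_height_m
    rx_alt = profile_m[-1] + rx_height_m
    for i in range(1, n - 1):
        frac = i / (n - 1)
        # Altitude of the straight ray above this intermediate sample.
        ray_alt = tx_alt + frac * (rx_alt - tx_alt)
        if profile_m[i] > ray_alt:
            return False
    return True
```

Repeating this check along many bearings and ranges from the ground station would yield a set of boundary points that approximates a coverage area.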

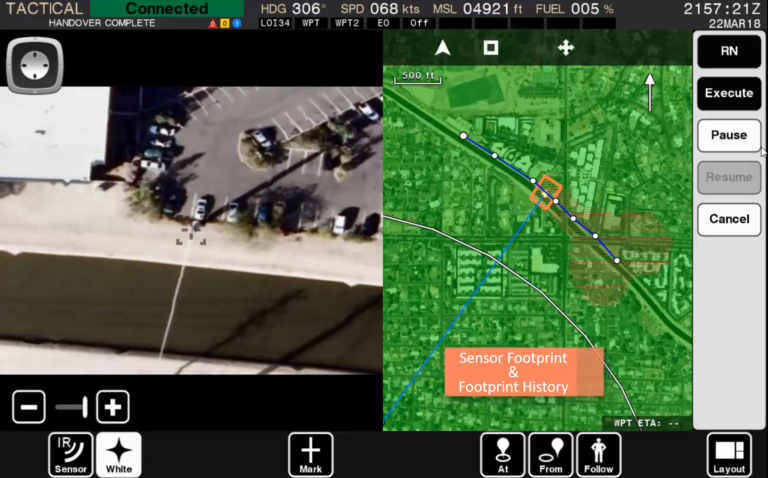

Sensor Footprint and Footprint History

The sensor footprint component takes real-time input on the unmanned vehicle’s position (latitude, longitude, altitude) and the pan, tilt, and zoom parameters of an EO/IR payload to determine the sensor’s actual projection onto the ground. Because the algorithm takes Digital Terrain Elevation Data (DTED) into account, the sensor footprint can be overlaid in both 2D and 3D environments, offering exceptional situational awareness of where the sensor has pointed, where it is pointing, and where it is capable of pointing.
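
A minimal flat-ground version of this projection is sketched below. It is an illustration under stated assumptions, not the product's algorithm: the ground is treated as a level plane (no DTED), positions are in a local east/north frame in metres, pan is measured clockwise from north, tilt is the depression angle below the horizon, and zoom is represented by horizontal and vertical field-of-view angles. The corner rays are approximated by offsetting pan and tilt by half the field of view. All names are hypothetical.

```python
import math

def ground_point(east_m, north_m, agl_m, pan_deg, tilt_deg):
    """Intersect one sensor ray with flat ground; None if it never hits."""
    if not 0.0 < tilt_deg < 180.0:
        return None  # ray is level or points upward
    ground_range = agl_m / math.tan(math.radians(tilt_deg))
    az = math.radians(pan_deg)
    return (east_m + ground_range * math.sin(az),
            north_m + ground_range * math.cos(az))

def footprint(east_m, north_m, agl_m, pan_deg, tilt_deg, hfov_deg, vfov_deg):
    """Approximate footprint corners: far-left, far-right, near-right, near-left."""
    corners = []
    for d_tilt, d_pan in ((-0.5, -0.5), (-0.5, 0.5), (0.5, 0.5), (0.5, -0.5)):
        point = ground_point(east_m, north_m, agl_m,
                             pan_deg + d_pan * hfov_deg,
                             tilt_deg + d_tilt * vfov_deg)
        if point is None:
            return None  # part of the view is at or above the horizon
        corners.append(point)
    return corners
```

Storing each computed polygon with a timestamp would give a simple footprint history; a terrain-aware version would instead step each ray forward until it drops below the elevation model.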

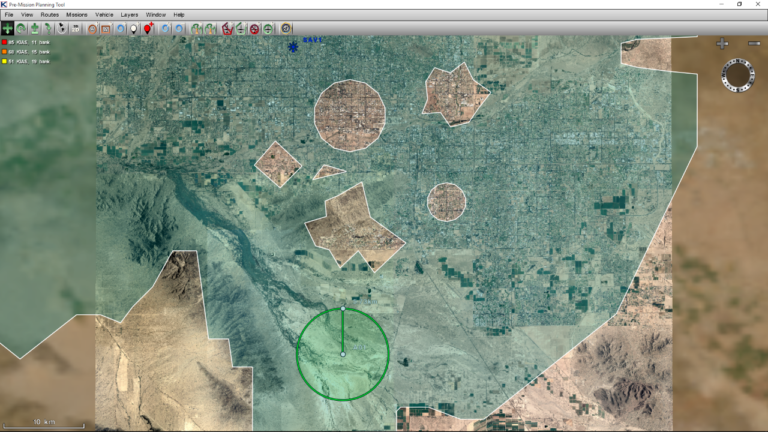

Autonomous Route & Area Survey

This component provides the capability to select a route or designate an area to survey. The algorithm determines an optimum flight plan that keeps the unmanned aerial vehicle (UAV) within defined airspace restrictions, avoids terrain, and gathers the designated imagery based on pertinent characteristics (e.g., dwell time, image overlap, and object detection size). The component outputs a STANAG 4586-compliant flight plan and a series of payload commands that can be sent to the UAV for execution.
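
To make the role of image overlap concrete, the sketch below generates a back-and-forth ("lawnmower") pattern over an axis-aligned rectangle. It is a simplified stand-in rather than the optimization described above: it assumes a flat local frame in metres, a fixed sensor swath width, and no airspace or terrain constraints. The function name and parameters are hypothetical, and the output is a plain waypoint list, not a STANAG 4586 message.

```python
import math

def lawnmower(x_min, y_min, x_max, y_max, swath_m, overlap):
    """Return waypoints for parallel north-south survey lines.

    swath_m: ground width imaged on each pass.
    overlap: required side overlap between adjacent passes, 0 <= overlap < 1.
    """
    if swath_m <= 0 or not 0 <= overlap < 1:
        raise ValueError("swath_m must be positive and overlap in [0, 1)")
    max_spacing = swath_m * (1 - overlap)
    width = x_max - x_min
    n_lines = max(1, math.ceil(width / max_spacing))
    step = width / n_lines  # actual spacing, never more than max_spacing
    waypoints = []
    for i in range(n_lines):
        x = x_min + (i + 0.5) * step
        leg = [(x, y_min), (x, y_max)]
        if i % 2:
            leg.reverse()  # alternate direction to avoid dead transits
        waypoints.extend(leg)
    return waypoints
```

For a 100 m by 200 m area with a 50 m swath and 20% overlap, this yields three passes, so six waypoints.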

Airspace Management

This component allows a user to input Restricted Operating Zones (ROZs), no-fly zones (NFZs), and flight corridors defined by airspace management authorities. Kutta’s algorithm merges the zones and flight corridors with the RF LOS coverage to define a Safe Airspace Volume (SAV). The result provides a visual reference for the UAV operator. The auto-router described above can also use the SAV to ensure the UAV stays within it.
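
One simple way to picture the merge is as a membership test: a position is safe if it lies inside the RF LOS coverage and outside every no-fly zone. The sketch below applies that idea in 2D with a standard ray-casting point-in-polygon test. It is an assumption-laden illustration, not Kutta's method: altitude limits, corridors, and ROZ semantics are omitted, and all names are hypothetical.

```python
def point_in_polygon(pt, poly):
    """Ray-casting test; poly is a list of (x, y) vertices in order."""
    x, y = pt
    inside = False
    n = len(poly)
    for i in range(n):
        x1, y1 = poly[i]
        x2, y2 = poly[(i + 1) % n]
        # Does this edge straddle the horizontal line through pt?
        if (y1 > y) != (y2 > y):
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside

def is_safe(pt, coverage, no_fly_zones):
    """True if pt is inside the coverage polygon and outside all NFZs."""
    return point_in_polygon(pt, coverage) and not any(
        point_in_polygon(pt, zone) for zone in no_fly_zones
    )
```

A router could evaluate `is_safe` on candidate waypoints; a full implementation would also need to test the legs between them and the vertical extent of each zone.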

Flight Planning

Flight Planning allows the operator to insert and delete waypoints and to modify their speed and heading while viewing a 2D or 3D geo-rectified environment. Flight planning supports both fixed-wing and vertical takeoff and landing (VTOL) UAVs. The component also allows an operator to save and reload any previous flight plans stored on board the Ground Control Station (GCS).

MPEG Metadata Solutions

Kutta uses an efficient, lightweight MPEG hardware encoder to digitize MPEG 1, 2, or 4 video and display it in real time. Our recorded MPEG data contains time-stamped, embedded unmanned vehicle parameters, such as the vehicle’s position and payload gimbal position, for improved video analysis and playback. When used in conjunction with Kutta’s sensor footprint, the video review mode provides the ability to visualize the vehicle’s location and the payload pointing position relative to the real-time video for improved geo-referenced situational awareness.

Battle Damage Assessment (BDA)

The BDA component allows a user to easily designate the standoff distance and direction for photographic and video reconnaissance. The user indicates a ground target location, along with the bearing and standoff distance from which the photo or video is to be captured. Based upon the Vehicle Specific Module (VSM) for the payload (i.e., fixed or gimbaled), Kutta’s BDA algorithm autonomously determines the waypoints for the air vehicle.
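
The geometry of placing a standoff point can be sketched with the standard great-circle destination formula. This is only an illustration: it assumes a spherical Earth, interprets the bearing as measured from the target to the vehicle (the source does not say which convention is used), and returns a single observation point rather than a full waypoint sequence. The function name and return layout are hypothetical.

```python
import math

EARTH_RADIUS_M = 6_371_000.0  # mean radius, spherical-Earth assumption

def standoff_waypoint(target_lat_deg, target_lon_deg, bearing_deg, standoff_m):
    """Return (lat_deg, lon_deg, look_heading_deg) for an observation point.

    bearing_deg: assumed direction from the target to the vehicle,
    clockwise from true north.
    """
    lat1 = math.radians(target_lat_deg)
    lon1 = math.radians(target_lon_deg)
    brg = math.radians(bearing_deg)
    d = standoff_m / EARTH_RADIUS_M  # angular distance in radians
    lat2 = math.asin(math.sin(lat1) * math.cos(d)
                     + math.cos(lat1) * math.sin(d) * math.cos(brg))
    lon2 = lon1 + math.atan2(math.sin(brg) * math.sin(d) * math.cos(lat1),
                             math.cos(d) - math.sin(lat1) * math.sin(lat2))
    # Reciprocal bearing; a close approximation at short standoff distances.
    look_heading = (bearing_deg + 180.0) % 360.0
    return math.degrees(lat2), math.degrees(lon2), look_heading
```

For a gimbaled payload the vehicle could orbit or pass near this point while the gimbal tracks the target; for a fixed payload the approach heading would have to match the look heading, which is one reason the payload type affects the resulting waypoints.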